signGPT

ASL-to-text interface combining live gesture recognition, image classification, and LLM input

Project Overview

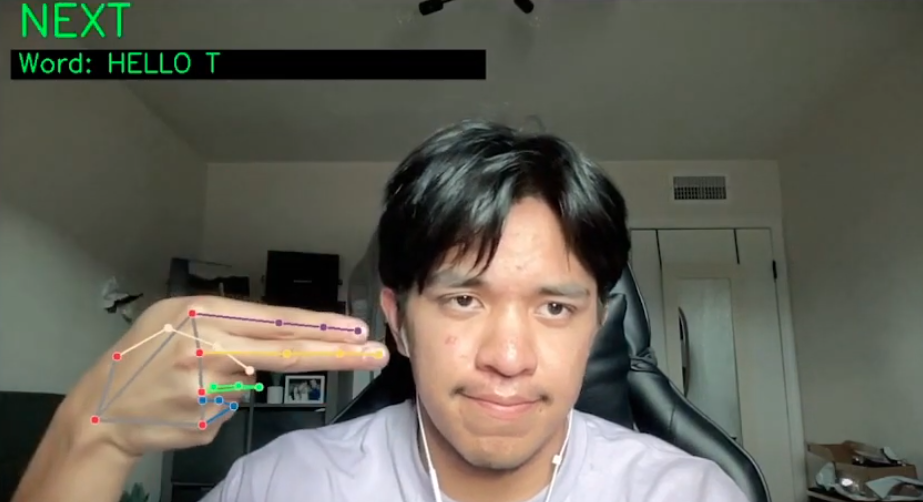

signGPT is a proof-of-concept accessibility system that uses American Sign Language as an input method for conversational AI. It combines image-based ASL classification, live video gesture recognition, and a UI layer that routes recognized signs into an LLM-oriented interface for more inclusive human-computer interaction.
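One way to picture the routing step is a small buffer that turns noisy per-frame labels into committed text before anything reaches the LLM. This is a minimal sketch, not the project's actual code: the `SignBuffer` class, the 15-frame window, and the 80% agreement threshold are all hypothetical values chosen for illustration.

```python
from collections import Counter, deque


class SignBuffer:
    """Hypothetical helper: commits a sign only after it is stable across frames."""

    def __init__(self, window=15, min_agreement=0.8):
        self.window = window                # frames considered (assumed value)
        self.min_agreement = min_agreement  # share of frames that must agree (assumed)
        self.recent = deque(maxlen=window)
        self.text = []
        self.last_committed = None

    def push(self, label):
        """Add one frame's label, or None when no hand is detected."""
        self.recent.append(label)
        if len(self.recent) < self.window:
            return
        top, count = Counter(self.recent).most_common(1)[0]
        if count / self.window < self.min_agreement:
            return  # frames disagree too much; wait for a stable sign
        if top is None:
            # A stable "no hand" gap re-arms the buffer so a letter can repeat.
            self.last_committed = None
        elif top != self.last_committed:
            self.text.append(top)
            self.last_committed = top

    def prompt(self):
        """Return the committed signs as the text to hand to the LLM."""
        return "".join(self.text)


buf = SignBuffer()
for label in ["H"] * 15 + [None] * 15 + ["I"] * 15:
    buf.push(label)
print(buf.prompt())
```

Requiring agreement across a window trades a little latency for fewer spurious characters, which is the same accuracy-versus-responsiveness tension the project describes.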

Challenges

The main challenge was balancing model accuracy against real-time responsiveness while coping with gesture variability and live webcam input. The workflow also had to support both uploaded images and live capture.

Solution

The system pairs a ResNet50 plus Transformer image pipeline with a MediaPipe landmark-based live recognizer, then wraps both in a browser-friendly interface for low-latency sign-to-text interaction.
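To illustrate why landmarks suit low-latency recognition, the sketch below normalizes a hand's landmarks so the features do not depend on where the hand is in the frame or how large it appears, then picks the nearest stored template. MediaPipe Hands reports 21 landmarks per hand with the wrist at index 0; everything else here is an assumption for illustration, including the `normalize` and `classify` helpers, the use of 2D coordinates only, and nearest-template matching, since the source does not say how the live recognizer classifies landmarks.

```python
import math

WRIST = 0  # MediaPipe Hands: 21 landmarks per hand, index 0 is the wrist


def normalize(landmarks):
    """Shift landmarks so the wrist is the origin, then scale the farthest point to 1."""
    wx, wy = landmarks[WRIST]
    shifted = [(x - wx, y - wy) for x, y in landmarks]
    scale = max(math.hypot(x, y) for x, y in shifted) or 1.0  # avoid dividing by zero
    return [(x / scale, y / scale) for x, y in shifted]


def distance(a, b):
    """Euclidean distance between two normalized landmark lists."""
    return math.sqrt(
        sum((ax - bx) ** 2 + (ay - by) ** 2 for (ax, ay), (bx, by) in zip(a, b))
    )


def classify(landmarks, templates):
    """Return the label of the closest template (hypothetical matching rule)."""
    feats = normalize(landmarks)
    return min(templates, key=lambda label: distance(feats, templates[label]))
```

Because each frame reduces to a few dozen numbers rather than a full image, this kind of comparison is cheap enough to run on every webcam frame, whereas the heavier image pipeline fits uploaded stills.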

Impact & Results

The image classifier reached 95.8% top-1 test accuracy, and live recognition ran at roughly 30 FPS, demonstrating the feasibility of ASL as an input layer for AI systems.
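For readers unfamiliar with the two metrics, the short sketch below shows how each is computed. The function names are illustrative and the sample labels are made up; the project's test-set size is not stated here, so no real data is reproduced.

```python
def top1_accuracy(predicted, actual):
    """Fraction of samples whose single highest-scoring prediction matches the true label."""
    if len(predicted) != len(actual) or not actual:
        raise ValueError("inputs must be non-empty and the same length")
    correct = sum(p == a for p, a in zip(predicted, actual))
    return correct / len(actual)


def frame_budget_ms(fps):
    """Time available to process one frame at a given frame rate, in milliseconds."""
    return 1000.0 / fps


# Illustrative labels only: three of four predictions are right.
print(top1_accuracy(["A", "B", "C", "D"], ["A", "B", "C", "E"]))
# Per-frame time budget at 30 FPS, rounded to one decimal place.
print(round(frame_budget_ms(30), 1))
```

At roughly 30 FPS, capture, landmark extraction, and classification together have to finish in about 33 ms per frame, which is the concrete form of the responsiveness constraint.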